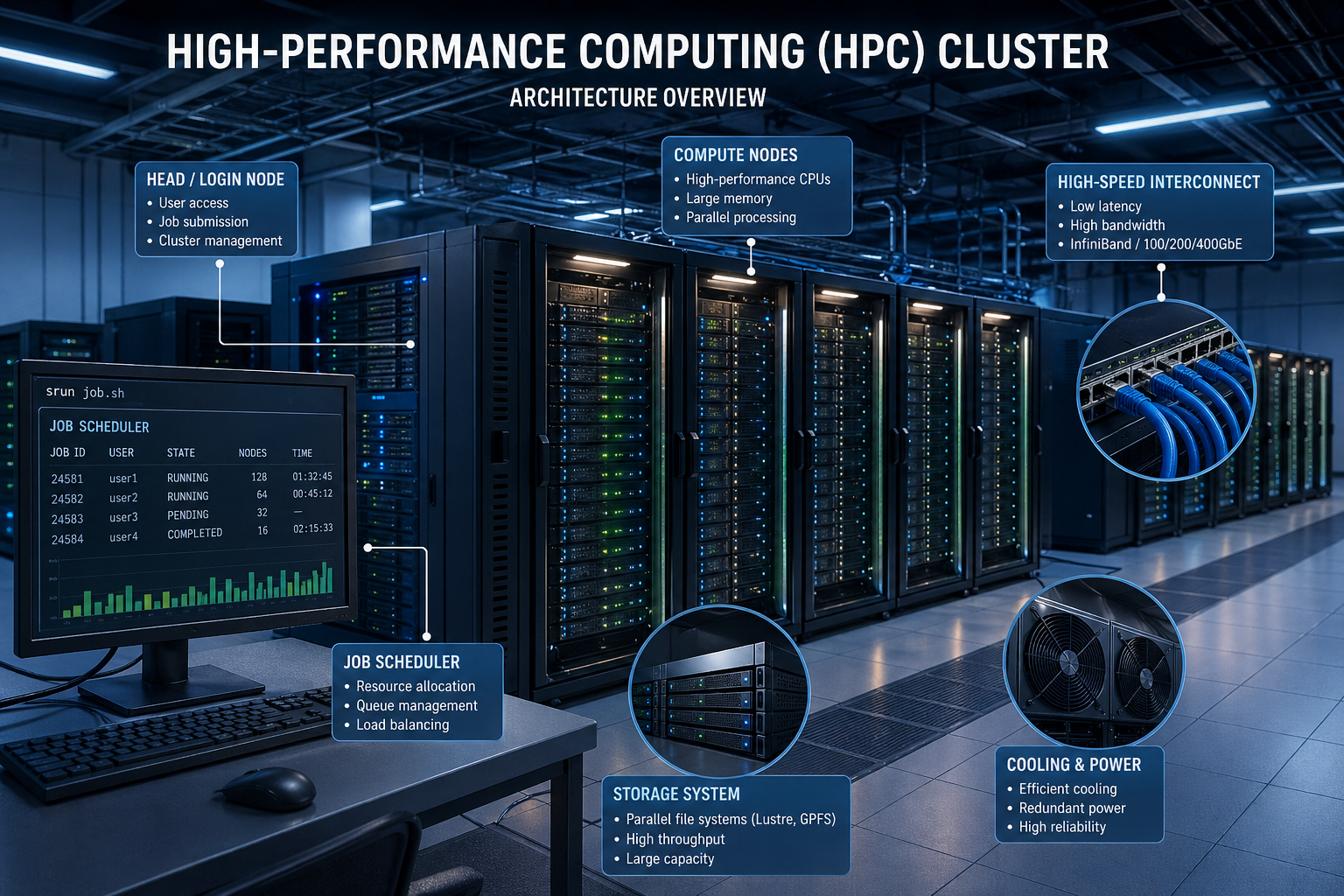

What is High-Performance Computing (HPC)?

High Performance Computing (HPC) is the use of many interconnected computers, called nodes, working together on problems too large or too slow for a single machine. HPC architecture refers to the design and structure of these systems: how the nodes, network, and storage are organized so that complex computations run at very high speed. Where traditional computing runs a workload on one machine, HPC systems split the workload across many nodes that compute in parallel.
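To make "distributing a workload across nodes" concrete, here is a minimal Python sketch of block decomposition: a large sum is split into contiguous index ranges, one per hypothetical node. Each range is summed independently, and the partial results are then combined (reduced). The function names `block_ranges`, `partial_sum`, and `distributed_sum` are illustrative assumptions. On a real cluster each block would run as a separate process on a different node, typically coordinated with a library such as MPI, rather than in a local thread pool.

```python
from concurrent.futures import ThreadPoolExecutor


def block_ranges(n_items: int, n_nodes: int) -> list[range]:
    """Split indices 0..n_items-1 into n_nodes contiguous blocks of near-equal size."""
    base, extra = divmod(n_items, n_nodes)
    ranges = []
    start = 0
    for node in range(n_nodes):
        # The first `extra` nodes each take one additional item.
        size = base + (1 if node < extra else 0)
        ranges.append(range(start, start + size))
        start += size
    return ranges


def partial_sum(indices: range) -> int:
    """Work done independently by one node: sum the squares of its indices."""
    return sum(i * i for i in indices)


def distributed_sum(n_items: int, n_nodes: int) -> int:
    """Scatter blocks to workers, compute partial results, then reduce them."""
    blocks = block_ranges(n_items, n_nodes)
    # Threads stand in for nodes. Because of Python's GIL they do not speed up
    # this pure-Python arithmetic, so this shows the structure, not the speedup.
    with ThreadPoolExecutor(max_workers=n_nodes) as pool:
        partials = list(pool.map(partial_sum, blocks))
    return sum(partials)  # the "reduce" step


print(block_ranges(10, 3))       # [range(0, 4), range(4, 7), range(7, 10)]
print(distributed_sum(1000, 4))  # 332833500
```

The same scatter, compute, and reduce pattern underlies many parallel HPC codes. Only the transport changes: messages over the interconnect take the place of an in-process thread pool.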

Understanding High Performance Computing (HPC) architecture is essential for industries such as artificial intelligence, scientific research, financial modeling, and big data analytics, where performance and scalability are critical.

High Performance Computing (HPC) Architecture Overview

Modern High Performance Computing (HPC) architecture is built around three core layers that work together to deliver extreme computational power:

Compute Layer

Responsible for processing data using CPUs, GPUs, and accelerators.

Network Layer

Provides high-speed communication between nodes using low-latency interconnects.

Storage Layer

Ensures fast and scalable access to large datasets across the cluster.

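The efficiency of any High Performance Computing (HPC) architecture depends on how well these layers are integrated. Fast processors sit idle if the network cannot exchange data quickly enough, or if the storage cannot supply their input and absorb their output.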

Key Components of High Performance Computing (HPC) Architecture

A well-designed HPC architecture includes several critical hardware components, each playing a specific role.

Compute Nodes in HPC Architecture

Compute nodes are the backbone of any HPC cluster. Each node is a server that contributes processing power to the system.

Types of Compute Nodes

- Standard Nodes – General-purpose CPU workloads

- High-Memory Nodes – Optimized for memory-intensive applications

- GPU Nodes – Designed for parallel workloads like AI and simulations

- Accelerated Nodes – Use FPGAs or ASICs for specialized tasks

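Modern HPC architecture often combines multiple node types so that each job runs on hardware suited to it. For example, memory-hungry analyses can go to high-memory nodes and deep learning training to GPU nodes.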
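To illustrate how a mixed cluster matches jobs to hardware, the sketch below picks the least specialized node type that satisfies a job's core, memory, and GPU requirements. The node names and specifications are hypothetical values chosen for the example, not figures for any particular system. Real schedulers express this through partitions and resource requests, not through a function like the hypothetical `pick_node_type`.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class NodeType:
    name: str
    cores: int
    memory_gb: int
    gpus: int


# Hypothetical node catalog, ordered from least to most specialized.
NODE_TYPES = [
    NodeType("standard", cores=64, memory_gb=256, gpus=0),
    NodeType("high-memory", cores=64, memory_gb=2048, gpus=0),
    NodeType("gpu", cores=64, memory_gb=512, gpus=4),
]


def pick_node_type(cores: int, memory_gb: int, gpus: int = 0) -> Optional[str]:
    """Return the first (least specialized) node type that fits the request."""
    for node in NODE_TYPES:
        if node.cores >= cores and node.memory_gb >= memory_gb and node.gpus >= gpus:
            return node.name
    return None  # no single node type can satisfy the request


print(pick_node_type(cores=32, memory_gb=128))         # standard
print(pick_node_type(cores=32, memory_gb=1024))        # high-memory
print(pick_node_type(cores=16, memory_gb=64, gpus=2))  # gpu
print(pick_node_type(cores=16, memory_gb=4096))        # None
```

Trying the least specialized type first keeps scarce high-memory and GPU nodes free for the jobs that actually need them.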

Head Node (Login Node)

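The head node acts as the entry point into the cluster. Larger clusters often split this role between user-facing login nodes and a separate management node.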

Responsibilities:

- User authentication

- Job submission

- Cluster management

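It is a control node rather than a compute resource. Heavy computations should be submitted as jobs instead of run on it, because the node is shared by every user.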

Job Scheduler

The scheduler manages how workloads are distributed across the cluster.

Common Tools:

- SLURM

- PBS

- IBM LSF

Functions:

- Resource allocation

- Queue management

- Load balancing

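Schedulers are essential for maintaining efficiency in HPC architecture. They keep expensive nodes busy, prevent jobs from oversubscribing shared resources, and enforce fair access among users.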
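The core of resource allocation and queue management can be sketched as a first-come, first-served queue that places each job on the first node with enough free cores. This is a deliberately simplified model built on stated assumptions: cores are the only resource, each job fits on one node, no job finishes during the pass, and `Job` and `schedule` are hypothetical names. Production schedulers such as SLURM, PBS, and LSF add priorities, fair-share, time limits, and backfilling.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Job:
    job_id: str
    cores: int  # assumption: each job needs this many cores on a single node


def schedule(queue: deque, free_cores: dict) -> dict:
    """Strict first-come, first-served pass with first-fit node placement.

    Starts jobs in queue order until the job at the head of the queue cannot
    fit anywhere. That job, and everything behind it, keeps waiting.
    Returns a mapping of job_id -> node name for the jobs that were started.
    """
    placements = {}
    while queue:
        job = queue[0]
        # First fit: the first node (in dict order) with enough free cores.
        node = next((n for n, free in free_cores.items() if free >= job.cores), None)
        if node is None:
            break  # head-of-line blocking; backfilling would try later jobs
        free_cores[node] -= job.cores  # allocate the resources
        placements[job.job_id] = node
        queue.popleft()
    return placements


free = {"node01": 64, "node02": 64}
pending = deque([Job("a", 48), Job("b", 32), Job("c", 64), Job("d", 8)])
print(schedule(pending, free))      # {'a': 'node01', 'b': 'node02'}
print([j.job_id for j in pending])  # ['c', 'd']
print(free)                         # {'node01': 16, 'node02': 32}
```

Job `d` needs only 8 cores and would fit on either node, but strict first-come, first-served ordering makes it wait behind `c`. Backfilling lets schedulers start such smaller jobs early when doing so does not delay the job at the head of the queue.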

High-Speed Interconnect

The network fabric connects all nodes in the cluster.

Key Features:

- Low latency

- High bandwidth

- High throughput

Technologies:

- InfiniBand

- High-speed Ethernet (100/200/400GbE)

Network performance is a critical factor in HPC architecture, especially for tightly coupled parallel workloads such as MPI-based simulations, in which nodes exchange data at every step.
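A common first-order way to reason about interconnect performance is the latency-bandwidth model, often called the alpha-beta or Hockney model. Under this model, sending an n-byte message costs roughly T(n) = α + n/β, where α is the per-message latency and β is the bandwidth. The sketch below uses illustrative numbers that are assumptions, not measurements of any specific product: 1 µs latency and a 200 Gb/s link, which is 25 GB/s. It shows why latency dominates the cost of small messages and bandwidth dominates the cost of large ones.

```python
def transfer_time_s(n_bytes: int, latency_s: float, bandwidth_bytes_per_s: float) -> float:
    """Alpha-beta model: fixed per-message latency plus size divided by bandwidth."""
    return latency_s + n_bytes / bandwidth_bytes_per_s


def half_bandwidth_size(latency_s: float, bandwidth_bytes_per_s: float) -> float:
    """Message size at which effective bandwidth n / T(n) reaches half the link peak."""
    return latency_s * bandwidth_bytes_per_s


# Illustrative assumptions, not vendor figures.
LATENCY_S = 1e-6       # 1 microsecond per message
BANDWIDTH = 200e9 / 8  # 200 Gb/s link expressed in bytes per second (25 GB/s)

for size in (8, 64 * 1024, 64 * 1024 * 1024):
    t = transfer_time_s(size, LATENCY_S, BANDWIDTH)
    latency_share = LATENCY_S / t
    print(f"{size:>10} bytes: {t * 1e6:10.2f} us, latency share {latency_share:6.1%}")
```

With these assumed figures, an 8-byte message costs essentially the full 1 µs latency. A 64 MiB transfer takes about 2.7 ms, and latency is negligible. At the crossover size α·β (25,000 bytes here), latency and transfer time are equal and the link delivers half of its peak bandwidth.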

Storage Systems

Storage in HPC environments must support high-speed data access and massive capacity.

Types of Storage:

- Parallel File Systems (Lustre, IBM Spectrum Scale)

- Local SSD/NVMe Storage

- Archival Storage (Tape/Object Storage)

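Efficient storage design is a key part of any HPC architecture. Parallel file systems spread each file across many storage servers so that many nodes can read and write at the same time.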
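Parallel file systems such as Lustre reach high aggregate throughput by striping a file across several storage targets. The sketch below assumes simple round-robin (RAID-0-style) striping with a fixed stripe size and stripe count. It computes which target holds a given byte offset. The function name and parameters are hypothetical, and real file systems offer further layout options that this model ignores.

```python
def stripe_location(offset: int, stripe_size: int, stripe_count: int) -> tuple[int, int]:
    """Map a byte offset in a file to (storage target index, offset within that target).

    Assumes round-robin striping: stripe k of the file lives on target k % stripe_count.
    """
    stripe_index = offset // stripe_size  # which stripe the byte falls in
    target = stripe_index % stripe_count  # round-robin target selection
    # Each target stores every stripe_count-th stripe back to back.
    local_stripe = stripe_index // stripe_count
    local_offset = local_stripe * stripe_size + offset % stripe_size
    return target, local_offset


MiB = 1024 * 1024
# Hypothetical layout: 1 MiB stripes spread over 4 storage targets.
for off in (0, 1 * MiB, 4 * MiB, 5 * MiB + 10):
    print(off, stripe_location(off, stripe_size=1 * MiB, stripe_count=4))
```

With 1 MiB stripes over 4 targets, the first 4 MiB of the file land on four different targets. A large sequential read is therefore served by all of them at once.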

CPUs in HPC Architecture

CPUs handle general-purpose processing and coordination.

Features:

- High core counts

- Advanced vector instructions (AVX-512, SVE)

- Large cache sizes

Common CPUs:

- AMD EPYC

- Intel Xeon
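Core counts and vector width together determine a node's theoretical peak floating-point rate: peak FLOP/s = sockets × cores per socket × clock rate × FLOPs per cycle per core. The sketch below works this out for a hypothetical dual-socket node. The figure of 32 double-precision FLOPs per cycle assumes a core with two 512-bit FMA units (8 doubles per vector × 2 operations per FMA × 2 units). That holds for some AVX-512 CPUs but not all, so check the specific processor. The clock rate used here is also an assumption.

```python
def peak_gflops(sockets: int, cores_per_socket: int, clock_ghz: float, flops_per_cycle: int) -> float:
    """Theoretical peak in GFLOP/s: every core retires its maximum FLOPs every cycle."""
    return sockets * cores_per_socket * clock_ghz * flops_per_cycle


# Assumed: two 512-bit FMA units per core, double precision.
DOUBLES_PER_VECTOR = 512 // 64  # 8 doubles fit in a 512-bit register
FLOPS_PER_FMA = 2               # one fused multiply-add counts as two FLOPs
FMA_UNITS = 2
FLOPS_PER_CYCLE = DOUBLES_PER_VECTOR * FLOPS_PER_FMA * FMA_UNITS  # 32

# Hypothetical node: 2 sockets x 32 cores at 2.0 GHz.
print(peak_gflops(2, 32, 2.0, FLOPS_PER_CYCLE))  # 4096.0
```

This is an upper bound. Sustained application performance is usually well below it, because real code rarely keeps every vector unit busy on every cycle.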

GPUs and Accelerators

GPUs provide massive parallel processing power.

Advantages:

- Thousands of cores

- High memory bandwidth

- Efficient for AI and simulations

Modern HPC increasingly relies on hybrid CPU + GPU systems.

Explore GPU computing technologies: NVIDIA Data Center

Memory (RAM)

Memory is critical for performance in HPC systems.

Characteristics:

- High capacity (typically hundreds of GB per node, and several TB on high-memory nodes)

- High bandwidth

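- NUMA-aware configurations (Non-Uniform Memory Access: a CPU reaches memory attached to its own socket faster than memory attached to another socket)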

Memory bottlenecks can significantly limit performance. Many HPC codes are memory-bound, meaning they spend more time waiting for data from RAM than performing arithmetic.
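One standard way to tell whether a code is limited by memory or by arithmetic is the roofline model. It states that attainable performance = min(peak compute, memory bandwidth × arithmetic intensity), where arithmetic intensity is the number of FLOPs performed per byte moved from memory. The sketch uses hypothetical node figures (3,000 GFLOP/s peak and 300 GB/s memory bandwidth) purely for illustration.

```python
def attainable_gflops(peak_gflops: float, bandwidth_gb_s: float, intensity_flops_per_byte: float) -> float:
    """Roofline model: performance is capped by compute or by memory traffic, whichever is lower."""
    return min(peak_gflops, bandwidth_gb_s * intensity_flops_per_byte)


# Hypothetical node figures for illustration only.
PEAK_GFLOPS = 3000.0
MEM_BW_GB_S = 300.0
ridge = PEAK_GFLOPS / MEM_BW_GB_S  # intensity where a kernel stops being memory-bound (10 FLOP/byte)

# Triad-style kernel a[i] = b[i] + s * c[i] on doubles:
# 2 FLOPs per element; reads b and c, writes a -> 24 bytes per element
# (ignoring cache effects and write-allocate traffic).
triad_intensity = 2 / 24
print(attainable_gflops(PEAK_GFLOPS, MEM_BW_GB_S, triad_intensity))  # ~25 GFLOP/s: memory-bound
print(attainable_gflops(PEAK_GFLOPS, MEM_BW_GB_S, 50.0))             # 3000.0: compute-bound
```

On this hypothetical node, the triad kernel is limited to roughly 25 GFLOP/s, under 1% of peak. This is why memory bandwidth, rather than core count, often decides the performance of such codes.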

Network Interface Cards (NICs)

NICs enable efficient communication between nodes.

Features:

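- RDMA (Remote Direct Memory Access), which moves data directly between the memory of two nodes without routing it through the operating system or the remote CPU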

- Low latency

- Reduced CPU overhead

Cooling and Power Infrastructure

Large HPC clusters require advanced cooling and power systems.

Cooling Methods:

- Air cooling

- Liquid cooling

- Immersion cooling

Power:

- High-density racks

- Redundant power supplies

How High Performance Computing (HPC) Architecture Works

The workflow inside an HPC system follows a structured process:

- User connects to the head node

- A job is submitted to the scheduler

- Resources are allocated

- Tasks are distributed across compute nodes

- Nodes communicate via high-speed interconnect

- Data is processed and stored

- Results are returned to the user

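Each step relies on a different part of the architecture. A bottleneck in any one of them, such as a long scheduler queue, a congested interconnect, or slow storage, delays the whole job, which is why efficient High Performance Computing (HPC) architecture design matters.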
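The workflow above can be modeled as a small state machine for a single job. The state names below follow the ones Slurm reports (PENDING, RUNNING, COMPLETED, FAILED). The transition table and the `JobRecord` class are simplified, hypothetical constructs for illustration, not Slurm's actual implementation, which also reports states such as CANCELLED and TIMEOUT.

```python
from enum import Enum


class State(Enum):
    PENDING = "queued, waiting for resources"
    RUNNING = "allocated nodes, executing"
    COMPLETED = "finished successfully, results written"
    FAILED = "exited with an error"


# Allowed transitions in this simplified model.
TRANSITIONS = {
    State.PENDING: {State.RUNNING},
    State.RUNNING: {State.COMPLETED, State.FAILED},
    State.COMPLETED: set(),
    State.FAILED: set(),
}


class JobRecord:
    def __init__(self, job_id: str):
        self.job_id = job_id
        self.state = State.PENDING  # a newly submitted job waits in the queue
        self.history = [State.PENDING]

    def advance(self, new_state: State) -> None:
        """Move to new_state, rejecting transitions the model does not allow."""
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(f"{self.job_id}: cannot go from {self.state.name} to {new_state.name}")
        self.state = new_state
        self.history.append(new_state)


job = JobRecord("job-42")
job.advance(State.RUNNING)    # scheduler allocated nodes; tasks start on compute nodes
job.advance(State.COMPLETED)  # results stored and available to the user
print([s.name for s in job.history])  # ['PENDING', 'RUNNING', 'COMPLETED']
```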

HPC vs Traditional Computing

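| Feature | Traditional Computing | HPC |
|---|---|---|
| Architecture | Single system | Cluster of interconnected nodes |
| Processing | Mostly sequential, or parallel only within one machine | Massively parallel across many nodes |
| Performance | Limited by one machine | Much higher, growing with node count for parallel workloads |
| Scalability | Vertical (upgrade the machine) | Horizontal (add more nodes) |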
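Moving from a single system to parallel processing across many nodes has a well-known limit, captured by Amdahl's law. If a fraction p of a program's runtime can be parallelized, the best possible speedup on N processors is S(N) = 1 / ((1 - p) + p/N). The sketch below evaluates the formula for an assumed 95% parallel fraction.

```python
def amdahl_speedup(parallel_fraction: float, n_procs: int) -> float:
    """Upper bound on speedup when only `parallel_fraction` of the work can run in parallel."""
    serial = 1.0 - parallel_fraction
    return 1.0 / (serial + parallel_fraction / n_procs)


# Assumed workload: 95% of the runtime parallelizes perfectly.
for n in (1, 16, 256, 4096):
    print(n, round(amdahl_speedup(0.95, n), 1))
```

With p = 0.95, the speedup is roughly 9.1× on 16 processors and 19.9× on 4,096. It approaches, but never exceeds, 1/(1 - p) = 20, no matter how many nodes are added. Amdahl's law assumes a fixed problem size. Workloads that grow along with the machine scale better than this bound suggests.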

Real-World Applications of HPC

High Performance Computing is used in:

- Weather forecasting

- Scientific simulations

- AI and machine learning

- Financial modeling

- Bioinformatics

See global HPC systems rankings: TOP500 Supercomputers

Conclusion

High Performance Computing (HPC) architecture is the foundation of modern large-scale computing systems. By combining compute nodes, high-speed networking, and scalable storage, HPC enables organizations to solve complex problems that would take far too long, or would not fit at all, on a single machine.

As data continues to grow, optimizing High Performance Computing (HPC) architecture will remain critical for performance, scalability, and innovation.